EU AI Act: What Hungarian Business Leaders Need to Know — 4 Months to Go

On August 2, 2026, the most important provisions of the EU AI Act take effect: the rules governing high-risk AI systems and the transparency requirements, which in practice you cannot meet without a complete inventory of the AI you use. The maximum penalty? €35 million or 7% of global annual turnover. You have four months. I’ll show you what you need to do, and what we’ve done at Gloster.

Over the past few weeks, at least six Hungarian business leaders have asked me the same question: “Viktor, does the EU AI Act apply to me?” The short answer is: if you use any kind of AI in your business—whether it’s scoring in CRM, filtering in HR, chatbots in customer service, or image generation in marketing—then yes, it does apply to you.

The EU AI Act in 60 seconds

The EU AI Act entered into force on August 1, 2024, but its requirements are being phased in gradually. Since February 2025, the bans on prohibited AI practices and the AI literacy requirement have applied. Since August 2025, the rules for general-purpose AI (GPAI) models have applied. The major milestone is August 2, 2026: from that date, the full set of obligations for the high-risk AI systems listed in Annex III, together with the transparency rules, will apply.

In November 2025, the European Commission proposed a “Digital Omnibus” package that would postpone certain high-risk obligations until December 2027, but it has not yet been adopted. Anyone who waits for it is taking a risk. The EU AI Act applies to Hungarian companies as well, regardless of their size.
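The phase-in schedule is easy to misread, so here is a small Python sketch that lists which obligation groups apply on a given date. It assumes the exact application dates in Article 113 of the Act (the text above gives months only) and deliberately ignores the Digital Omnibus proposal, since it has not been adopted.

```python
from datetime import date

# Application dates per Article 113 of the AI Act. The Digital Omnibus
# proposal is not reflected because it has not been adopted.
MILESTONES = [
    (date(2024, 8, 1), "entry into force"),
    (date(2025, 2, 2), "prohibited practices; AI literacy"),
    (date(2025, 8, 2), "general-purpose AI model rules"),
    (date(2026, 8, 2), "Annex III high-risk obligations; transparency rules"),
]


def in_force(on: date) -> list[str]:
    """Return the milestone labels whose application date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def days_until(deadline: date, today: date) -> int:
    """Whole days remaining until `deadline` (negative once it has passed)."""
    return (deadline - today).days


today = date(2026, 4, 2)  # example date, roughly four months before the deadline
print(in_force(today))    # the first three milestones
print(days_until(date(2026, 8, 2), today))  # 122
```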

The four risk levels of the EU AI Act — and which one you fall into

The AI Act does not regulate everything in the same way. It takes a risk-based approach.

Unacceptable risk (prohibited): social scoring, subliminal manipulation, and certain uses of biometrics. These practices have been prohibited since February 2025.

High risk (Annex III): AI used in recruitment, credit assessment, education, law enforcement, and employee management. The August 2026 deadline applies to these areas: mandatory documentation, transparency, human oversight, and post-market monitoring.

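Limited risk: chatbots, generative AI, and deepfakes. The main requirement: users must be aware that they are communicating with AI, and AI-generated content must be labeled. These requirements also take effect on August 2, 2026. Emotion-recognition systems carry similar transparency duties, but in most uses they are classified as high-risk, and in the workplace or in education they are prohibited.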

Minimal risk: spam filters, recommendation systems, inventory management AI. There are no specific obligations under the AI Act, but the GDPR and consumer protection laws remain in effect.

Most Hungarian SMEs and mid-sized companies fall somewhere between “limited” and “high” risk. If you use AI-based screening in HR or run a chatbot in customer service, you are not in the minimal-risk category.
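To make the tiers concrete, here is a minimal Python sketch of a first-pass triage. The use-case labels are assumptions drawn from the examples above, not the legal tests in Article 5, Article 6, and Annex III, so treat the result as a prompt for review rather than a classification.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    PROHIBITED = "unacceptable (prohibited)"
    HIGH = "high (Annex III)"
    LIMITED = "limited (transparency duties)"
    MINIMAL = "minimal"


# Illustrative use-case labels taken from the examples in this article.
# They are an assumption for the sketch, not the legal definitions.
TIER_KEYWORDS = [
    (RiskTier.PROHIBITED, {"social scoring", "subliminal manipulation"}),
    (RiskTier.HIGH, {"recruitment", "credit assessment", "education",
                     "law enforcement", "employee management"}),
    (RiskTier.LIMITED, {"chatbot", "generative content", "deepfake"}),
    (RiskTier.MINIMAL, {"spam filter", "recommendations", "inventory management"}),
]


def triage(use_cases: set[str]) -> Optional[RiskTier]:
    """Return the most severe tier matched by any use case.

    Returns None when nothing matches, meaning a person must classify it.
    """
    normalized = {u.strip().lower() for u in use_cases}
    for tier, keywords in TIER_KEYWORDS:  # ordered from most to least severe
        if normalized & keywords:
            return tier
    return None


print(triage({"Chatbot", "recruitment"}))  # RiskTier.HIGH
```

A chatbot that also screens applicants lands in the high tier because the most severe match wins. Returning None for unrecognized uses, instead of defaulting to minimal, is a deliberate choice: anything the rules do not cover should go to a human reviewer.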

You’re a “deployer”—and that’s important

The AI Act distinguishes between the developer (provider) and the user (deployer). The vast majority of Hungarian companies are deployers: they do not develop AI models themselves, but use others’ products as SaaS or APIs.

But deployers also have obligations when it comes to high-risk systems: they must ensure human oversight, inform affected people, and in certain cases conduct a fundamental rights impact assessment (FRIA). And if you put your own name or trademark on a high-risk system, substantially modify it, or change an AI system’s intended purpose so that it becomes high-risk (for example, using a general chatbot to screen job applicants), you become a provider, and all provider obligations fall on you.
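The provider trigger can be written down as a checklist. The Python sketch below is a simplified reading of the three triggers in Article 25(1); the field names are hypothetical, and whether a change counts as "substantial" is a legal judgment that the code only records, not decides.

```python
from dataclasses import dataclass


@dataclass
class DeploymentFacts:
    # Hypothetical field names for the Article 25(1) triggers (simplified).
    system_is_high_risk: bool          # classification before your changes
    own_name_or_trademark: bool        # you market it under your own brand
    substantial_modification: bool     # a legal judgment, recorded as yes/no
    repurposed_to_high_risk_use: bool  # e.g. a general chatbot now screening CVs


def becomes_provider(f: DeploymentFacts) -> bool:
    """Return True if any of the three simplified Article 25(1) triggers applies."""
    # Rebranding or substantially modifying a system that is already high-risk.
    if f.system_is_high_risk and (f.own_name_or_trademark or f.substantial_modification):
        return True
    # Changing the intended purpose of a non-high-risk system so it becomes high-risk.
    return (not f.system_is_high_risk) and f.repurposed_to_high_risk_use


# A general-purpose chatbot repurposed to screen job applicants:
print(becomes_provider(DeploymentFacts(False, False, False, True)))  # True
```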

According to a survey conducted by the Center for Data Innovation at the end of 2025, fewer than 30 percent of European SMEs had begun preparing for the AI Act. That is a strategic mistake.

The penalties — no joke

The numbers speak for themselves:

- €35 million or 7% of global annual turnover: for engaging in prohibited AI practices

- €15 million or 3%: for breaching most other obligations, including those for high-risk systems

- €7.5 million or 1%: for supplying incorrect or misleading information to the authorities

For larger companies, whichever of the two amounts is higher applies. For SMEs and start-ups, the penalties are proportional: the cap is whichever amount is lower. But “proportional” does not mean “negligible.”
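The cap arithmetic works differently for larger companies and SMEs. This Python sketch encodes the three tiers listed above and computes the upper limit only; the actual fine is set by the authority case by case, taking into account factors such as the gravity and duration of the infringement (Article 99(7)).

```python
# Fine caps per infringement tier: (fixed amount in EUR, % of worldwide annual turnover).
TIERS = {
    "prohibited_practice": (35_000_000, 7.0),
    "other_obligations": (15_000_000, 3.0),   # includes high-risk duties
    "incorrect_information": (7_500_000, 1.0),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: float, is_sme: bool) -> float:
    """Upper limit of a fine for one tier.

    Larger companies: whichever of the two amounts is higher.
    SMEs and start-ups: whichever is lower (Article 99(6)).
    """
    fixed_cap, pct = TIERS[tier]
    turnover_cap = worldwide_turnover_eur * pct / 100
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


# An SME with EUR 20 million turnover breaching a high-risk obligation:
print(max_fine_eur("other_obligations", 20_000_000, is_sme=True))  # 600000.0
```

For a larger company with €1 billion turnover, the same function returns €70 million for a prohibited practice, because 7% of turnover exceeds the €35 million fixed amount.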

What you need to do: 5 specific steps

1. Create an AI inventory. List every AI system your company uses: SaaS, APIs, built-in features, everything. Most companies don’t realize in how many places AI is already running within their processes. From ChatGPT subscriptions to CRM lead scoring to chatbots, it all counts.

2. Risk classification. Classify each identified system into one of the four levels. If you are unsure, remember that AI used in recruitment, employee evaluation, credit assessment, and access to essential services is typically high-risk.

3. Supplier questionnaire. Ask all your AI suppliers: Do they have technical documentation in accordance with Annex IV? What data was used to train the model? What human oversight mechanisms do they provide? Have they registered the system in the EU database? Any supplier unable to answer these questions poses a compliance risk.

4. Compliance with transparency rules. If you operate a chatbot, users must know that they are interacting with AI. If you publish content created with generative AI, it must be labeled as such. These rules apply regardless of risk level from August 2026.

5. Appoint a point person. Designate one person within the organization to be responsible for AI compliance. You don’t need a separate legal department—but you do need someone to maintain the AI inventory, review classifications, and hold vendors accountable.
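Steps 1, 2, 3, and 5 come down to keeping one well-structured list. The Python sketch below shows one hypothetical way to hold the inventory in code and flag what is still open; a spreadsheet with the same columns works just as well. The field names, the condensed question wording, and the vendor names are assumptions for illustration.

```python
from dataclasses import dataclass, field

# Condensed wording of the supplier questions from step 3.
SUPPLIER_QUESTIONS = (
    "Annex IV technical documentation?",
    "Training data described?",
    "Human oversight mechanisms?",
    "Registered in the EU database?",
)


@dataclass
class AISystem:
    name: str
    vendor: str
    delivery: str                    # "SaaS", "API", or "built-in feature"
    use_cases: list[str]
    risk_tier: str = "unclassified"  # set during step 2
    owner: str = ""                  # the point person from step 5
    # Vendor answers keyed by question; a missing or blank answer counts as open.
    supplier_answers: dict[str, str] = field(default_factory=dict)

    def open_questions(self) -> list[str]:
        return [q for q in SUPPLIER_QUESTIONS
                if not self.supplier_answers.get(q, "").strip()]


def compliance_gaps(inventory: list[AISystem]) -> dict[str, list[str]]:
    """Map each system with outstanding work to its to-do list."""
    gaps: dict[str, list[str]] = {}
    for system in inventory:
        todo = []
        if system.risk_tier == "unclassified":
            todo.append("classify risk tier")
        if not system.owner:
            todo.append("assign owner")
        todo += [f"ask vendor: {q}" for q in system.open_questions()]
        if todo:
            gaps[system.name] = todo
    return gaps


# Hypothetical inventory entries; vendor names are placeholders.
inventory = [
    AISystem("CRM lead scoring", "VendorA", "built-in feature", ["lead scoring"],
             risk_tier="minimal", owner="AI compliance lead",
             supplier_answers={q: "answered" for q in SUPPLIER_QUESTIONS}),
    AISystem("CV screening", "VendorB", "API", ["recruitment"]),
]
for name, todo in compliance_gaps(inventory).items():
    print(name, todo)
```

Only systems with open items appear in the output, so the list doubles as the point person's work queue.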

What we did at Gloster

Gloster is a publicly traded IT services company with 340 employees. We are both an AI deployer (in our own operations) and an AI consultant (for our clients). Because of this dual role, we had to act sooner.

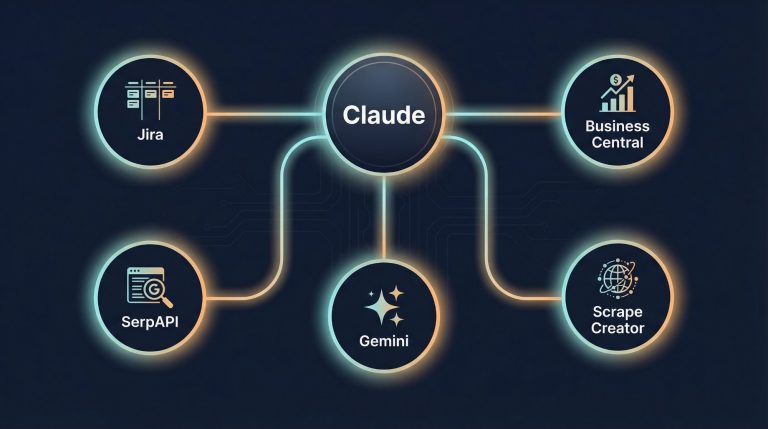

For us, AI governance isn’t just a document—it’s a working practice. We have 23 AI skills running in our daily operations, and for each one, we’ve defined scopes of authority, approval steps, and an audit trail. When an AI agent sends an email on my behalf, it doesn’t go out without approval. When it retrieves data from the ERP system, it operates with least-privilege permissions. When it audits Jira, it logs what it has viewed.

That is exactly the approach required by the AI Act—only we’re not doing it because we have to, but because it makes sense.
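The three controls described above (approval before an email goes out, least-privilege access to the ERP, a log of what was viewed) form a common pattern for governing AI agents. The Python sketch below illustrates that general pattern; it is not Gloster’s implementation, and the class, scope names, and approval callback are hypothetical.

```python
import json
import time
from typing import Callable


class GovernedAgent:
    """Gate each agent action by scope and, optionally, human approval; log every decision."""

    def __init__(self, name: str, scopes: set[str],
                 approve: Callable[[str, dict], bool]):
        self.name = name
        self.scopes = scopes    # least privilege: only the scopes this skill needs
        self.approve = approve  # human-in-the-loop decision callback
        self.audit_log: list[str] = []

    def _log(self, action: str, detail: dict, outcome: str) -> None:
        # Append-only JSON lines kept in memory; production needs durable storage.
        self.audit_log.append(json.dumps({
            "ts": time.time(), "agent": self.name,
            "action": action, "detail": detail, "outcome": outcome,
        }))

    def authorize(self, action: str, scope: str, detail: dict,
                  needs_approval: bool = False) -> bool:
        """Return True if the caller may perform the action; log the decision either way."""
        if scope not in self.scopes:
            self._log(action, detail, "denied: out of scope")
            return False
        if needs_approval and not self.approve(action, detail):
            self._log(action, detail, "rejected by approver")
            return False
        self._log(action, detail, "authorized")
        return True


# Hypothetical usage: the approver callback stands in for a real review step.
agent = GovernedAgent("mail-assistant", {"email:send", "erp:read"},
                      approve=lambda act, det: det.get("to", "").endswith("@example.com"))
print(agent.authorize("send_email", "email:send", {"to": "client@example.com"}, needs_approval=True))  # True
print(agent.authorize("update_invoice", "erp:write", {"invoice_id": 42}))  # False
print(len(agent.audit_log))  # 2
```

Denied and rejected attempts are logged as well as approved ones, which is what makes the trail useful in an audit.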

Summary

The EU AI Act is not optional. It doesn't apply only to tech giants. It's not going away. The most important requirements take effect in four months, and more than 70 percent of European SMEs haven't even started preparing.

The preparation process is straightforward: AI inventory, risk assessment, supplier questionnaire, transparency, and designation of a responsible party. Five steps. Most of them can be completed in a week.

The question isn't whether you should do it. The question is when you'll start. The answer: today.

Frequently Asked Questions (FAQ)

When will the EU AI Act take effect in Hungary?

The EU AI Act is being phased in gradually: the rules on prohibited AI practices took effect in February 2025, the rules on general-purpose models in August 2025, and the rules on high-risk AI systems apply from August 2, 2026. In Hungary, the NMHH is responsible for oversight.

Do Hungarian SMEs need an AI audit due to the EU AI Act?

In practice, yes: every company that uses artificial intelligence should inventory its AI tools and classify them by risk level. This is the first step toward AI compliance and can be completed in a week.

As a business leader, how should I start preparing for the AI Act?

In five steps: conducting an AI inventory, risk assessment, supplier questionnaire, compliance with transparency rules, and appointing a responsible person. We applied the same approach at the Gloster Group, which has 340 employees.

Does the EU AI Act apply to the use of ChatGPT and Copilot?

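Yes. From August 2, 2026, the transparency rules apply to generative AI tools: providers must mark AI-generated output in a machine-readable way, and deployers must disclose deepfakes as well as AI-generated text published to inform the public on matters of public interest. The AI literacy requirement has applied since February 2025, so employees who use these tools should already be trained.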

Related articles

- We no longer build chatbots—we build a digital workforce —Gloster’s AI governance practices: 23 skills, audit trail, least privilege—in the spirit of the AI Act

- 7 custom AI connectors that save 15–20 hours of work per week — The first step in AI inventory: which AI systems are running in daily operations